What Is AIO Readiness — and Why Your Website Probably Isn't

The Search Shift Nobody Warned You About

Something changed quietly in how buyers find software vendors. Senior engineers and procurement leads are increasingly asking ChatGPT, Claude, or Perplexity to recommend tools — not typing into Google. If your website isn't structured for AI agents to read and cite, you don't show up. It doesn't matter how good your product is.

This is what AIO readiness — AI Optimization readiness — actually means: not whether your site looks good to humans, but whether it's legible to machines that are actively summarizing, ranking, and recommending vendors on your behalf.

What AI Agents Actually Look At

When a language model's crawler fetches your site (assuming you let it), it's not admiring your hero section. It's trying to answer questions like: what does this company do, who do they serve, what do they charge, and can I trust the content enough to cite it?

The signals that answer those questions are technical and mostly invisible to your users:

- Schema.org markup: structured JSON-LD that tells AI agents you're an Organization, what services you offer, and at what price points

- llms.txt: an emerging standard (similar to robots.txt) that gives LLMs a plain-English guide to your site's content and how to use it

- robots.txt directives: whether you're explicitly allowing or blocking crawlers like GPTBot, ClaudeBot, and PerplexityBot

- Semantic HTML: proper use of <header>, <main>, <section>, and heading hierarchy so content can be parsed without executing JavaScript

- Content accessibility: whether your core value proposition is readable without JS, and whether your metadata aligns with your actual headings
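The last two signals can be approximated from static HTML alone, which is roughly what a crawler sees when it doesn't execute JavaScript. Below is a minimal sketch using Python's built-in html.parser. The specific checks (exactly one <h1>, a <main> landmark, no skipped heading levels, some word overlap between <title> and <h1>) are our own illustrative heuristics, not the scoring rules of any particular audit tool.

```python
from html.parser import HTMLParser

# Illustrative heuristics only; not the scoring rules of any particular audit tool.
LANDMARKS = {"header", "main", "section", "nav", "article", "footer"}


class StructureAudit(HTMLParser):
    """Collects landmarks, heading levels, <title> text, and first <h1> text from static HTML."""

    def __init__(self):
        super().__init__()
        self.landmarks = set()
        self.headings = []    # heading levels in document order, e.g. [1, 2, 2, 3]
        self.title = ""
        self.h1_text = ""
        self._capture = None  # element whose text is currently being collected
        self._h1_seen = False

    def handle_starttag(self, tag, attrs):
        if tag in LANDMARKS:
            self.landmarks.add(tag)
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self.headings.append(int(tag[1]))
        if tag == "title":
            self._capture = "title"
        elif tag == "h1" and not self._h1_seen:
            self._h1_seen = True
            self._capture = "h1"

    def handle_endtag(self, tag):
        if tag == self._capture:
            self._capture = None

    def handle_data(self, data):
        if self._capture == "title":
            self.title += data
        elif self._capture == "h1":
            self.h1_text += data


def audit_structure(html: str) -> list[str]:
    """Return human-readable problems found in the HTML as served (no JavaScript executed)."""
    parser = StructureAudit()
    parser.feed(html)
    parser.close()
    problems = []
    if "main" not in parser.landmarks:
        problems.append("no <main> landmark")
    h1_count = parser.headings.count(1)
    if h1_count != 1:
        problems.append(f"expected exactly one <h1>, found {h1_count}")
    # Headings may return to any shallower level but should descend one level at a time.
    for prev, cur in zip(parser.headings, parser.headings[1:]):
        if cur > prev + 1:
            problems.append(f"heading level jumps from h{prev} to h{cur}")
    # Crude metadata/heading alignment check: do <title> and <h1> share any word?
    title_words = set(parser.title.lower().split())
    h1_words = set(parser.h1_text.lower().split())
    if title_words and h1_words and not (title_words & h1_words):
        problems.append("<title> and <h1> share no words")
    return problems
```

A page whose content is injected by a JavaScript bundle into an empty root <div> will fail most of these checks, which is the point: that is the version of the page a non-rendering crawler gets.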

Most sites — including ours, before we fixed it — fail several of these silently.

We Ran the Audit on Ourselves

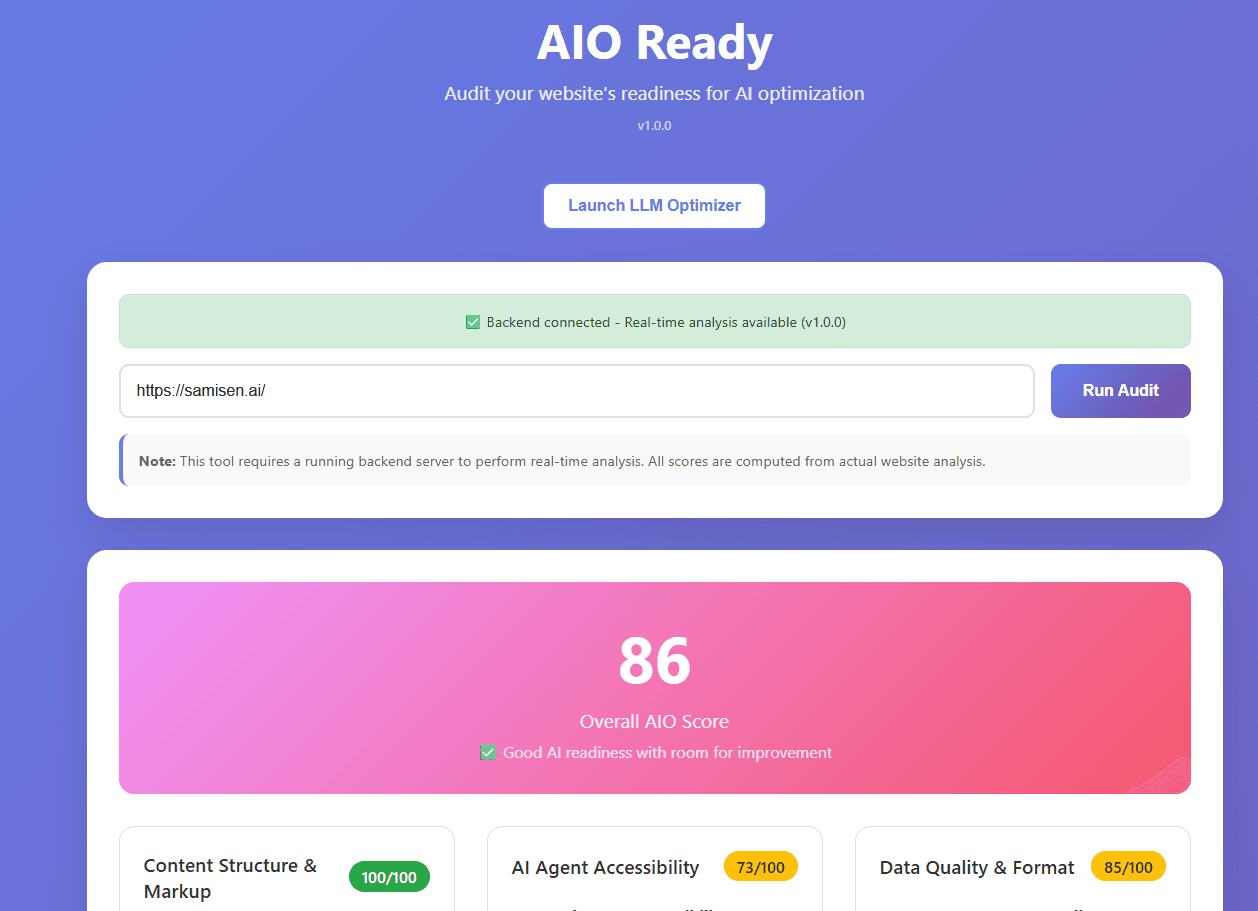

We built two free tools to assess and improve AIO readiness: AIO Ready for scoring your site across eight categories, and LLM Optimizer for generating AI-ready Schema.org markup from any URL.

Then we pointed them at samisen.ai.

The first run scored us a 69 out of 100 — poor AI readiness. The specific failures were concrete:

- No llms.txt file

- No Schema.org structured data (the structured data score was 35/100)

- Missing security headers that affect AI agent trust signals

- No explicit AI bot directives in robots.txt
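The security-header failure is the easiest one to check yourself. This sketch compares a response's headers against a baseline set. The post doesn't say which headers the audit flagged, so the four names in EXPECTED_HEADERS are an assumption (a common hardening baseline), and fetch_headers needs network access to run.

```python
import urllib.request

# Assumption: a common hardening baseline, not the audit tool's actual list.
EXPECTED_HEADERS = {
    "strict-transport-security",
    "content-security-policy",
    "x-content-type-options",
    "referrer-policy",
}


def missing_security_headers(headers: dict[str, str]) -> list[str]:
    """Return expected header names absent from a response, compared case-insensitively."""
    present = {name.lower() for name in headers}
    return sorted(EXPECTED_HEADERS - present)


def fetch_headers(url: str) -> dict[str, str]:
    """Fetch response headers with a HEAD request (requires network access)."""
    req = urllib.request.Request(url, method="HEAD")
    with urllib.request.urlopen(req, timeout=10) as resp:
        return dict(resp.headers.items())
```

In practice you would call missing_security_headers(fetch_headers("https://your-site.example/")) and add whatever comes back to your server or CDN configuration.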

We fixed each item. Added llms.txt. Generated rich JSON-LD markup using LLM Optimizer — including Organization, WebSite, and a full OfferCatalog covering each service with price ranges and delivery timelines. Updated robots.txt. Added the missing security headers.
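The shape of that markup can be sketched in a few lines of Python. This is a hypothetical builder, not LLM Optimizer's actual output: it nests an OfferCatalog under the Organization via hasOfferCatalog, expresses each price range as a PriceSpecification with minPrice/maxPrice, expresses each delivery timeline as a deliveryLeadTime, and links the WebSite back to the Organization by @id. The service names, prices, and input keys are placeholders.

```python
import json

# Hypothetical input shape; adapt the keys to however you store your service list.
EXAMPLE_SERVICES = [
    {"name": "Example Service A", "description": "Placeholder description",
     "min_price": 1000, "max_price": 5000, "currency": "USD",
     "min_days": 5, "max_days": 10},
]


def build_jsonld(name: str, url: str, services: list[dict]) -> dict:
    """Build a JSON-LD @graph with an Organization (plus OfferCatalog) and a WebSite."""
    org_id = f"{url}#organization"
    offers = [
        {
            "@type": "Offer",
            "itemOffered": {"@type": "Service", "name": s["name"], "description": s["description"]},
            # Price range as a PriceSpecification with min/max bounds.
            "priceSpecification": {
                "@type": "PriceSpecification",
                "minPrice": s["min_price"],
                "maxPrice": s["max_price"],
                "priceCurrency": s["currency"],
            },
            # Delivery timeline in days; "DAY" is the UN/CEFACT unit code for day.
            "deliveryLeadTime": {
                "@type": "QuantitativeValue",
                "minValue": s["min_days"],
                "maxValue": s["max_days"],
                "unitCode": "DAY",
            },
        }
        for s in services
    ]
    organization = {
        "@type": "Organization",
        "@id": org_id,
        "name": name,
        "url": url,
        "hasOfferCatalog": {"@type": "OfferCatalog", "name": f"{name} services", "itemListElement": offers},
    }
    website = {"@type": "WebSite", "@id": f"{url}#website", "name": name, "url": url, "publisher": {"@id": org_id}}
    return {"@context": "https://schema.org", "@graph": [organization, website]}


def as_script_tag(data: dict) -> str:
    """Serialize for embedding in HTML; escaping "</" stops a string value from closing the tag early."""
    body = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{body}\n</script>'


if __name__ == "__main__":
    print(as_script_tag(build_jsonld("Example Co", "https://example.com/", EXAMPLE_SERVICES)))
```

Using a single @graph with @id references keeps the Organization defined once while letting the WebSite point to it as publisher, rather than repeating the organization's details in two places.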

The third audit run scored 86 out of 100. Content structure and accessibility hit 100/100. The remaining gap is one persistent issue: our robots.txt still blocks several AI crawlers by name. That's the next fix.

The point isn't the score. The point is that a 69 → 86 improvement happened in a single afternoon, with no paid tools, no agency, and no infrastructure changes.

The Remaining Gap: AI Bot Access

The one issue that persisted across all three audit runs — and the one worth flagging explicitly — is AI crawler blocking. Despite having llms.txt and structured data in place, our robots.txt was still issuing Disallow rules for GPTBot, ClaudeBot, PerplexityBot, anthropic-ai, and CCBot.

This is a common artifact of security-first robots.txt templates that block everything by default. The fix is straightforward: remove the Disallow rules for the crawlers you want to index your content, and add explicit Allow directives in their place:

User-agent: GPTBot
Allow: /

User-agent: ClaudeBot
Allow: /

User-agent: PerplexityBot
Allow: /

User-agent: anthropic-ai
Allow: /

If you've done everything else right but left these blocked, you've built a well-labeled store with the door locked.
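Before deploying, you can sanity-check the rules with Python's standard urllib.robotparser. This sketch assumes a file that keeps a blanket "User-agent: *" / "Disallow: /" for everything else and opens access to four AI crawlers; CCBot is deliberately left out to show the contrast. Python's parser applies the first matching rule in file order rather than the longest-match rule in RFC 9309, so treat this as a sanity check, not a guarantee of how each crawler will interpret the file.

```python
from urllib.robotparser import RobotFileParser

AI_CRAWLERS = ["GPTBot", "ClaudeBot", "PerplexityBot", "anthropic-ai", "CCBot"]

# Example file: blanket block for everyone else, explicit Allow groups for four AI crawlers.
ROBOTS_TXT = """\
User-agent: *
Disallow: /

User-agent: GPTBot
Allow: /

User-agent: ClaudeBot
Allow: /

User-agent: PerplexityBot
Allow: /

User-agent: anthropic-ai
Allow: /
"""


def crawler_access(robots_txt: str, page_url: str) -> dict[str, bool]:
    """Report whether each AI crawler may fetch page_url under the given robots.txt text."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {bot: parser.can_fetch(bot, page_url) for bot in AI_CRAWLERS}


if __name__ == "__main__":
    for bot, allowed in crawler_access(ROBOTS_TXT, "https://example.com/pricing").items():
        print(f"{bot:15} {'allowed' if allowed else 'blocked'}")
```

Python's parser also treats a blank line as the end of a group, so a User-agent line separated from its Allow line by a blank line is silently discarded there. Keeping each pair adjacent avoids that ambiguity.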

Why This Doesn't Have to Cost Anything

Most AIO readiness advice leads to a paid audit, a retainer, or a consultant's day rate. The problems it surfaces are almost never that exotic. The fixes are JSON-LD in a <script> tag, a text file in your public directory, and a handful of lines in robots.txt.
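The text file is just as simple to produce. Here is a sketch following the llms.txt format proposed at llmstxt.org (an H1 with the site name, a blockquote summary, then H2 sections of Markdown link lists). The section names, links, and the "public" output directory are placeholders for your own site.

```python
from pathlib import Path


def build_llms_txt(name: str, summary: str, sections: dict[str, list[tuple[str, str, str]]]) -> str:
    """Render llms.txt: H1 title, blockquote summary, then H2 sections of Markdown link lists."""
    lines = [f"# {name}", "", f"> {summary}", ""]
    for heading, links in sections.items():
        lines += [f"## {heading}", ""]
        for title, url, note in links:
            # Each entry is a Markdown link, optionally followed by ": note".
            lines.append(f"- [{title}]({url})" + (f": {note}" if note else ""))
        lines.append("")
    return "\n".join(lines)


def write_llms_txt(text: str, public_dir: str = "public") -> Path:
    """Write llms.txt into the directory your site serves from its root (hypothetical default)."""
    path = Path(public_dir) / "llms.txt"
    path.write_text(text, encoding="utf-8")
    return path


if __name__ == "__main__":
    print(build_llms_txt(
        "Example Co",
        "Example Co builds placeholder software for placeholder customers.",
        {"Services": [("Pricing", "https://example.com/pricing", "Service list with price ranges")]},
    ))
```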

AIO Ready and LLM Optimizer are free because the goal is to make this accessible — not to gate it behind a discovery call. Run the audit against your site, read what fails, and use the LLM Optimizer to generate the Schema.org markup you're missing. Both tools take under five minutes to run.

The websites that show up in AI-generated recommendations won't necessarily be the biggest or the best-funded. They'll be the ones that made it easy for AI agents to understand what they do.

What does your site score? Run it at aio.samisen.ai — we're curious what the common failure patterns look like across event tech.